1. Executive Summary

As of 2026-04-26 (JST), attention in AI is shifting from “performance” alone toward “safety and operations that work on the ground.” OpenAI released OpenAI Privacy Filter as an open-weight model for PII detection and masking, reinforcing the move toward building privacy into systems from the start rather than adding it as an afterthought. OpenAI also began offering ChatGPT for Clinicians for free to verified individual healthcare professionals in the US, lowering the barrier to entry for healthcare operations. In parallel, NVIDIA updated its NIM VLM documentation, which makes it easier for adopters to track what has changed.

2. Today’s Highlights

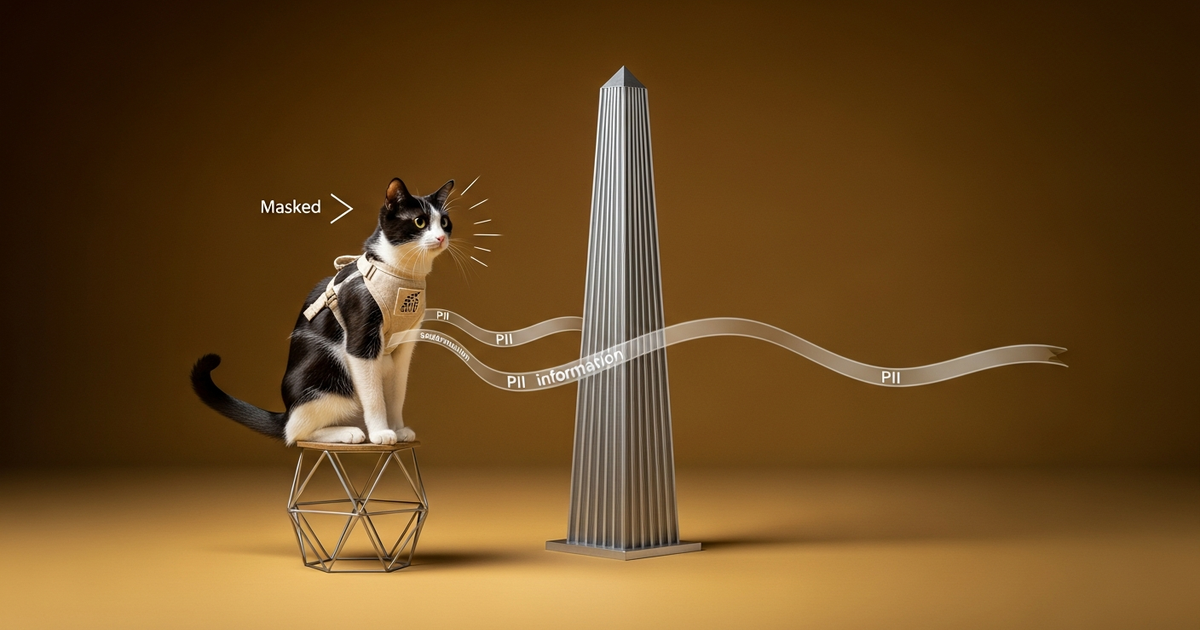

Highlight 1: OpenAI releases the open-weight “OpenAI Privacy Filter” for PII detection and masking

Summary

OpenAI released OpenAI Privacy Filter, a model that detects personally identifiable information (PII) in text and masks or redacts it. OpenAI describes it as a compact model designed for local execution and suited to high-throughput privacy workflows. It is released under the Apache 2.0 license, which makes it straightforward for developers to integrate into their own environments. (openai.com)

Background

With the spread of generative AI, text now flows through more places, including logs, knowledge bases, search indexes, and training pipelines. As a result, “where PII enters and at which stage protection should be applied” has become a core product-design question. PII detection has traditionally relied on rules keyed to formats such as email addresses and phone numbers. In real-world operations, however, context-dependent personal information (the boundary between public and non-public information, ambiguous phrasing, variations in how information is written, and so on) tends to cause more trouble. OpenAI positions Privacy Filter as a model for this kind of “context-aware” PII classification. (openai.com)

Technical Explanation

According to OpenAI’s explanation, Privacy Filter uses “bidirectional token classification + span decoding” and is built on a taxonomy of privacy labels that covers personal identifiers, contact details, addresses, private dates, account and card numbers, and secrets such as API keys and passwords. At inference time, it applies a constrained decoding procedure to convert token-level predictions into coherent spans. (openai.com)
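To make the idea of turning token predictions into coherent spans concrete, here is a minimal Python sketch. It is not OpenAI’s implementation: the BIO tagging scheme, the label names (`EMAIL`, `PHONE`), the function names, and the single repair rule are all assumptions chosen for illustration, and the per-token tags are hard-coded rather than produced by a model.

```python
from typing import List, Optional, Tuple

Span = Tuple[int, int, str]  # (start_char, end_char, label)


def decode_spans(offsets: List[Tuple[int, int]], tags: List[str]) -> List[Span]:
    """Merge per-token BIO tags into character spans.

    Constraint applied (an assumption for this sketch): an "I-X" tag may only
    continue an open span with the same label X; otherwise it starts a new span.
    """
    spans: List[Span] = []
    current: Optional[list] = None  # open span as [start, end, label]
    for (start, end), tag in zip(offsets, tags):
        if tag == "O":
            if current is not None:
                spans.append((current[0], current[1], current[2]))
                current = None
            continue
        prefix, _, label = tag.partition("-")
        if prefix == "I" and current is not None and current[2] == label:
            current[1] = end  # extend the open span
        else:
            if current is not None:
                spans.append((current[0], current[1], current[2]))
            current = [start, end, label]  # start a new span
    if current is not None:
        spans.append((current[0], current[1], current[2]))
    return spans


def mask(text: str, spans: List[Span]) -> str:
    """Replace each (non-overlapping) span with a bracketed label placeholder."""
    parts: List[str] = []
    cursor = 0
    for start, end, label in sorted(spans):
        parts.append(text[cursor:start])
        parts.append(f"[{label}]")
        cursor = end
    parts.append(text[cursor:])
    return "".join(parts)


text = "Mail jane@example.com or call 555-0100 now"
# Hypothetical tokenizer offsets and model tags; the orphan "I-PHONE" is repaired.
offsets = [(0, 4), (5, 9), (9, 21), (22, 24), (25, 29), (30, 38), (39, 42)]
tags = ["O", "B-EMAIL", "I-EMAIL", "O", "O", "I-PHONE", "O"]

print(mask(text, decode_spans(offsets, tags)))
# prints: Mail [EMAIL] or call [PHONE] now
```

A production decoder would more likely enforce such transition rules while scoring tag sequences (for example with Viterbi-style search) instead of repairing tags afterwards; the sketch only shows why a constraint is needed at all.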

On the evaluation side, OpenAI cites F1 on the PII-Masking-300k benchmark (including the correction benchmark) and improvements from domain adaptation with small amounts of data, arguing that implementers can balance accuracy with adaptability to their own environments. (openai.com)
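For readers less familiar with the metric, the following Python sketch shows one common way to compute span-level precision, recall, and F1. Exact-match scoring (a predicted span counts only if start, end, and label all match) is an assumption here; the source does not say which matching rule the benchmark uses, and `span_f1` is a hypothetical helper name.

```python
from typing import Iterable, Tuple

Span = Tuple[int, int, str]  # (start_char, end_char, label)


def span_f1(predicted: Iterable[Span], gold: Iterable[Span]) -> Tuple[float, float, float]:
    """Return (precision, recall, F1) under exact span-and-label matching."""
    pred_set, gold_set = set(predicted), set(gold)
    true_positives = len(pred_set & gold_set)
    precision = true_positives / len(pred_set) if pred_set else 0.0
    recall = true_positives / len(gold_set) if gold_set else 0.0
    if precision + recall == 0.0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Two correct spans plus one spurious prediction.
gold = [(5, 21, "EMAIL"), (30, 38, "PHONE")]
predicted = [(5, 21, "EMAIL"), (30, 38, "PHONE"), (39, 42, "NAME")]
precision, recall, f1 = span_f1(predicted, gold)
print(f"precision={precision:.3f} recall={recall:.3f} f1={f1:.3f}")
# prints: precision=0.667 recall=1.000 f1=0.800
```

For masking use cases, recall is often the number to watch first, since a missed span leaks data while a spurious span only over-redacts.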

Impact and Outlook

The impact of this release goes beyond adding one more PII filter. Because the model is open-weight and intended for local execution, companies can more easily design for “protection without external transmission.” This widens the architectural choices for where privacy is enforced: LLM preprocessing (input stage), storage (logs and indexes), and review (human verification).
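As a rough illustration of the input and storage stages, the Python sketch below wires a pluggable redactor into a request handler. Everything here is hypothetical: `handle_request`, `regex_redactor`, and the echo “LLM” are stand-ins, and the email regex is a deliberately simple placeholder for a locally executed model such as Privacy Filter, whose real interface is not shown in the source.

```python
import re
from typing import Callable, Dict, List

Redactor = Callable[[str], str]

# Simple stand-in pattern; a local PII model would replace this function.
EMAIL_RE = re.compile(r"[\w.+-]+@[\w-]+(?:\.[\w-]+)+")


def regex_redactor(text: str) -> str:
    """Placeholder redactor that masks email-like strings only."""
    return EMAIL_RE.sub("[EMAIL]", text)


def handle_request(
    prompt: str,
    redact: Redactor,
    call_llm: Callable[[str], str],
    log_store: List[Dict[str, str]],
) -> str:
    """Apply redaction at the input stage and again before storage."""
    safe_prompt = redact(prompt)   # input stage: raw PII never reaches the LLM
    reply = call_llm(safe_prompt)
    # storage stage: redact the reply too, in case the model produced PII
    log_store.append({"prompt": safe_prompt, "reply": redact(reply)})
    return reply


logs: List[Dict[str, str]] = []
reply = handle_request(
    "Contact bob@example.org please",
    regex_redactor,
    lambda p: f"echo: {p}",  # stand-in for a real model call
    logs,
)
print(reply)
# prints: echo: Contact [EMAIL] please
```

Passing the redactor as a parameter keeps the stage placement independent of the detection method, so the regex can be swapped for a model without touching the handler.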

Going forward, operational requirements around Privacy Filter are likely to become competitive factors: the granularity of PII detection (span-level), compatibility of masking specifications, and auditability (when masking occurred and on what basis). (openai.com)
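One way to picture the auditability requirement is a per-request record of when masking happened and which spans triggered it. The Python sketch below is an assumption-laden example, not a format defined by OpenAI: the field names, the `audit_record` helper, and the `model_version` string are all hypothetical. It stores a SHA-256 digest of the input instead of the raw text so the audit log does not itself become a PII store.

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import Dict, List, Optional, Tuple

Span = Tuple[int, int, str]  # (start_char, end_char, label)


def audit_record(
    text: str,
    spans: List[Span],
    model_version: str,
    now: Optional[datetime] = None,
) -> Dict[str, object]:
    """Build an audit entry that records the masking decision without raw text."""
    timestamp = now if now is not None else datetime.now(timezone.utc)
    return {
        "timestamp": timestamp.isoformat(),
        "model_version": model_version,  # which detector made the decision
        "input_sha256": hashlib.sha256(text.encode("utf-8")).hexdigest(),
        "spans": [{"start": s, "end": e, "label": label} for s, e, label in spans],
    }


record = audit_record(
    "Mail jane@example.com now",
    [(5, 21, "EMAIL")],
    model_version="example-filter-v0",  # hypothetical identifier
    now=datetime(2026, 4, 26, tzinfo=timezone.utc),
)
print(json.dumps(record, indent=2))
```

Span offsets and labels give reviewers the “on what basis” part, while the digest lets an auditor confirm which input a record refers to if the original is available elsewhere under stricter access controls.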

Source

OpenAI official blog “Introducing OpenAI Privacy Filter”

Highlight 2: OpenAI offers “ChatGPT for Clinicians” for free to healthcare professionals (verified individual users in the US)

Summary

OpenAI released ChatGPT for Clinicians and began offering it for free to verified individual healthcare professionals in the US (physicians, NPs, PAs, and pharmacists). It aims to support documentation and medical research work in healthcare, easing time pressure and administrative workload in clinical settings. (openai.com)

Background

In healthcare, beyond care itself, administrative tasks, documentation, compliance work, and keeping up with a growing volume of medical papers and guidelines can become “hidden bottlenecks.” OpenAI frames the release against a US healthcare system under heavy pressure, with clinicians increasingly turning to AI tools (the gist being that a 2026 survey showed increased use among physicians). (openai.com)

It also clearly defines the offering as “free” and “for verified individual users,” which lowers adoption barriers and lets clinicians “try it in the field first.” (openai.com)

Technical Explanation

Technically, ChatGPT for Clinicians is positioned as optimized for clinical tasks, with a focus on documentation assistance and medical research support. The release article also names the GPT‑5.4 family as the underlying model(s) and refers to a benchmark for medical tasks (HealthBench). (openai.com)

The article further states that, before release, medical advisors tested many conversations drawn from daily work (clinical care, documentation, research), and that the design includes protections such as not using conversations for training and supporting MFA. This suggests a stance that ties safety design to operational deployment. (openai.com)

Impact and Outlook

Far more than general-purpose chat, healthcare is held to strict requirements around “the cost of errors,” “handling of data,” and “auditability.” Free availability may look like a lightweight initiative at first glance, but in practice it can function as an “entry point where feedback from the field is easier to collect.”

As a result, failure patterns in clinical document generation, summarization, and research assistance (ambiguous context, misused terminology, lagging behind guideline updates, and so on) may surface earlier, potentially improving product safety and quality.

The question going forward is whether it moves beyond isolated tasks toward replacing “business processes themselves” by integrating with healthcare institution workflows (existing EHRs, in-hospital documents, audit logs). (openai.com)

Source

OpenAI official blog “Making ChatGPT better for clinicians”

Highlight 3: Trends in OpenAI research releases, with privacy protection and product integration advancing in parallel

Summary

OpenAI’s research release list for the period shows OpenAI Privacy Filter in chronological order alongside other releases, including large-scale updates such as GPT‑5.5. In other words, the list makes clear that “building privacy-protection foundations” and “expanding model and product capabilities” are progressing in parallel within the same release cycle. (openai.com)

Background

In recent years, AI development has not been completed by standalone improvements in model performance alone. In real deployments, surrounding factors such as data protection, evaluation design, and ease of adoption (SDKs, APIs, local execution, auditability) often determine whether a product succeeds. OpenAI’s research release index shows that these “surrounding factors” continue to surface from the research side as well. (openai.com)

Technical Explanation

Privacy Filter is small and designed with local execution in mind, and it comes with a PII label taxonomy, constrained decoding, and benchmark evaluations. The same list also introduces much larger models. Seeing research releases organized this way makes it easier for developers to choose the components they need (safety, capability, evaluation). (openai.com)

Impact and Outlook

From a developer perspective, there is growing room not only to select models but also to rearrange components such as “input preprocessing,” “output postprocessing,” and “auditing and review.” Going forward, how far each company progresses toward “modularizing safety” may become visible not just as a rails-vs-guardrails debate, but as differences in implementation cost and time-to-adoption. (openai.com)

Source

OpenAI Research (Release Index)

3. Other News

Other 1: NVIDIA updates the Early Access release notes for NIM VLM (Vision Language Models) (documentation update)

NVIDIA maintains a release notes page in the Early Access documentation for VLMs within NIM, and at least a documentation update has been made (as shown by the “Last updated” display). For adopters, this matters because it makes changes at the early access stage (bug fixes and specification differences) easier to track. Because VLMs span both vision and language, deployers are likely to stumble over assumptions about input/output formats, preprocessing, and performance. Updated release notes should reduce that uncertainty during implementation. Source: NVIDIA NIM for Vision Language Models (Early Access) Release Notes

Other 2: NVIDIA NIM for Visual Generative AI continues updating documentation (Release Notes/Guides)

The NVIDIA NIM for Visual Generative AI documentation includes guides that map directly to on-the-ground requirements such as Air-Gapped Deployment, performance, and Observability, not just model deployment. At minimum, the “Last updated” display confirms that updates are continuing. When running generative AI in production, network constraints and operational monitoring (metrics, logs, observability) become decision points, so the documentation indicates that preparation continues from an operations viewpoint rather than stopping at API delivery. Source: NVIDIA NIM for Visual Generative AI documentation

Other 3: Anthropic announces event information for Google Cloud Next 2026 (official event page)

Anthropic has published an event page for Google Cloud Next 2026. The event details need to be reviewed individually, but the page at least signals a “posture of connecting with the cloud platform ecosystem.” For enterprise users, access to a model is not the only adoption hurdle; the practical barrier is how to handle deployment, operations, and integration on the cloud (existing data, monitoring, security). Such event collaborations can therefore be read as clues to an implementation roadmap. Source: Anthropic “Anthropic at Google Cloud Next 2026”

Other 4: Apple ML announces “Apple Scholars in AIML 2026,” support for research talent in the AIML field

Apple Machine Learning Research announced the 2026 recipients of “Apple Scholars in AIML” (a PhD fellowship). Such initiatives do not directly affect “model performance,” but they tend to seed the inflow of research talent and joint research over the medium to long term. The announcement suggests that past fellows have produced papers accepted at major conferences, indicating continued ties with the research community. Because the outlook for AI development depends strongly on talent supply, the continuity of research support is worth tracking as a trend. Source: Apple ML Research “Announcing the 2026 Apple Scholars in AIML”

Other 5: Apple ML publishes highlights of research presentations and participation at ICLR 2026

Apple Machine Learning Research summarizes its research presentations, sponsorship, and participation in related events at ICLR 2026. Apple shares its research openly while increasing touchpoints with the conference community, and the work shown spans basic research through implementation-oriented topics. For practitioners, it can give an early sense of where upcoming research topics (e.g., representation learning, generation, inference, modality integration) are heading. Source: Apple ML Research “Apple Machine Learning Research at ICLR 2026”

Other 6: OpenAI updates product-side release notes such as ChatGPT Enterprise/Edu (tracking features, rates, etc.)

OpenAI’s Help Center has a Release Notes page for ChatGPT Enterprise & Edu, with entries organized by update date. For organizations and educational institutions, tracking these release notes is essential because feature differences, migration timing, and new pricing or rates directly affect operational planning. Even without reading every entry published on the day, the page is meaningful as primary information showing that “product operations updates are continuing.” Source: OpenAI Help Center “ChatGPT Enterprise & Edu - Release Notes”

4. Summary and Outlook

What today’s set of primary sources shows is that AI’s center of gravity is shifting from “model capabilities” to “the conditions required for success in real environments.” OpenAI’s Privacy Filter is a representative example, pointing clearly toward “distributing privacy protection as a mechanism.” The free offering of ChatGPT for Clinicians likewise stands out as an effort to build a foothold for adoption in a high-cost area like healthcare.

Meanwhile, NVIDIA is improving the practical experience of deploying and operating VLMs and generative AI through documentation updates. On the research community side, Apple continues its talent support and conference participation, supporting the next wave of technology in terms of both people and information. (openai.com)

In the short term (over the next few weeks), the key points to watch are: (1) how far the handling of PII and sensitive information moves from “model functionality” to “surrounding foundations (preprocessing, auditing, local execution),” (2) whether adoption models such as free, verified offerings spread in heavily regulated, high-responsibility domains like healthcare, and (3) how much the deployment experience for VLMs and generative models improves with operations-oriented offerings like NIM.

5. References

| Title | Source | Date | URL |
|---|---|---|---|
| Introducing OpenAI Privacy Filter | OpenAI | 2026-04-22 | https://openai.com/index/introducing-openai-privacy-filter/ |
| Making ChatGPT better for clinicians | OpenAI | 2026-04-22 | https://openai.com/index/making-chatgpt-better-for-clinicians/ |
| OpenAI Research (Release Index) | OpenAI | 2026-04-23 | https://openai.com/research/index/release/ |
| Release Notes — NVIDIA NIM for Vision Language Models (VLMs) | NVIDIA | 2026-04-20 | https://docs.nvidia.com/nim/vision-language-models/early-access/release-notes.html |
| NVIDIA NIM for Visual Generative AI — Docs | NVIDIA | 2026-04-07 | https://docs.nvidia.com/nim/visual-genai/latest/index.html |
| Anthropic at Google Cloud Next 2026 | Anthropic | 2026-04-22 | https://www.anthropic.com/events/anthropic-at-google-cloud-next-2026 |
| Announcing the 2026 Apple Scholars in AIML | Apple Machine Learning Research | 2026-04-21 | https://machinelearning.apple.com/updates/apple-scholars-aiml-2026 |
| Apple Machine Learning Research at ICLR 2026 | Apple Machine Learning Research | 2026-04-22 | https://machinelearning.apple.com/research/iclr-2026 |
| ChatGPT Enterprise & Edu - Release Notes | OpenAI Help Center | 2026-04-22 | https://help.openai.com/en/articles/10128477-chatgpt-enterprise-edu-release-notes |

This article was automatically generated by an LLM. It may contain errors.