Executive Summary

From April 7-8, 2026, significant advancements were observed across various scientific and technological fields, particularly in the sophistication of AI’s autonomous behavior and the development of new materials pushing physical limits. Notably, dynamic control in non-stationary environments within robotics and the emergence of new memory technologies resistant to high temperatures are breakthroughs poised to reshape the foundation of next-generation computing. This article provides a comprehensive overview of these critical recent papers and considers their pathways to societal implementation.

Featured Papers

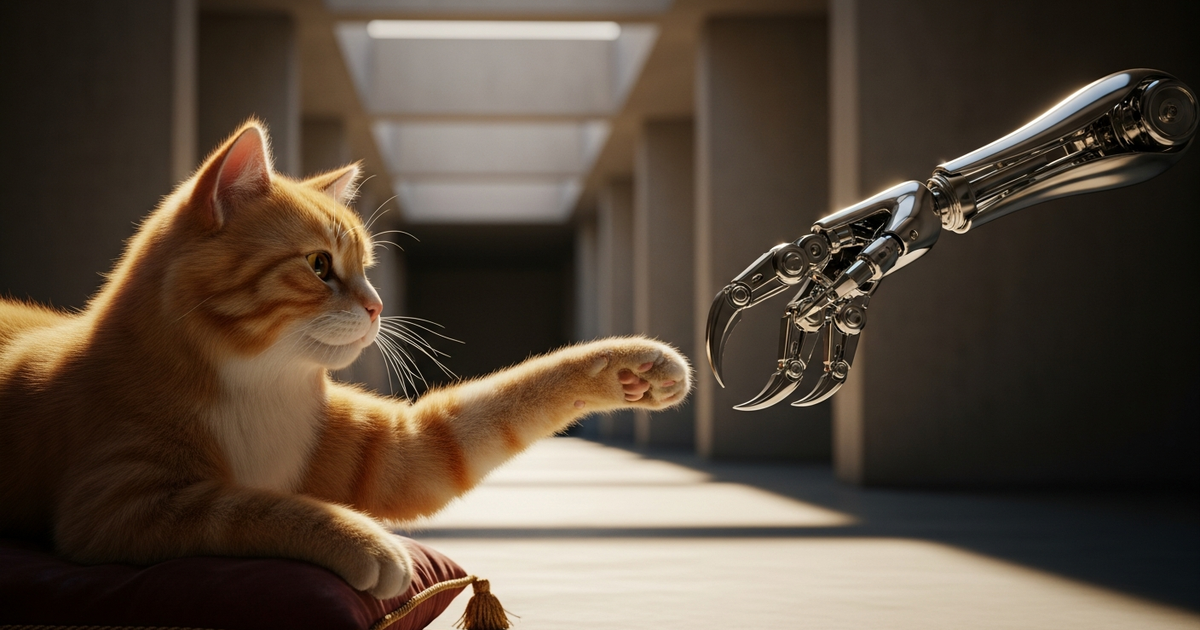

Paper 1: Belief Dynamics for Detecting Behavioral Shifts in Safe Collaborative Manipulation (Robotics & Autonomous Agents)

- Authors & Affiliation: Devashri Naik, Divake Kumar, Nastaran Darabi, Amit Ranjan Trivedi (Research institution related to computer science)

- Background and Research Question: Robots working in shared workspaces must safely collaborate with humans and other agents. However, if a collaborative partner changes their strategy mid-operation, the robot’s continued reliance on old assumptions increases the risk of collisions and accidents. This research addresses the challenge of how to quickly detect such “non-stationary behavior.”

- Proposed Method: A “belief dynamics” framework is proposed using probabilistic models to recognize changes in the collaborative partner’s behavioral patterns. Validation in the ManiSkill environment shows agents modeling the partner’s behavioral shifts in real-time and adjusting their own actions.

- Key Results: Compared to 10 other detection methods, adopting this framework successfully reduced the collision rate by 52% after the partner’s behavioral shift.

- Significance and Limitations: This is a crucial step towards safer human-robot collaboration in industrial settings. A limitation is that covering all patterns of highly complex human behavior is difficult, requiring validation with broader datasets.

- Source: Belief Dynamics for Detecting Behavioral Shifts in Safe Collaborative Manipulation

This research emphasizes how crucial inferring “what the other agent is likely to do” is for robots. While humans intuitively notice “oh, this person is acting differently today,” robots need to compute this mathematically. As this technology advances, collaboration with humans exhibiting irregular movements will become safer, not just in factories but also with care robots in homes.

Paper 2: Bridging Large-Model Reasoning and Real-Time Control via Agentic Fast-Slow Planning (Robotics & Autonomous Agents)

- Authors & Affiliation: Jiayi Chen, Shuai Wang, Guangxu Zhu, Chengzhong Xu (Systems control and AI-related)

- Background and Research Question: While Large Language Models (LLMs) possess high logical reasoning capabilities, they cannot match the millisecond-level response times required for robot control. The challenge is how to resolve the dilemma between inference “thinking time” and control “reaction speed.”

- Proposed Method: An agentic approach called “Fast-Slow Planning” is introduced. This system involves a slow LLM formulating high-level goals, with faster subsystems complementing these goals to perform real-time control.

- Key Results: Language instructions are instantly translated into physical actions, significantly improving success rates and response stability in complex manipulation tasks compared to conventional methods.

- Significance and Limitations: This is an extremely useful method for equipping AI with advanced intelligence and agile physical capabilities. However, the inference cost of LLMs remains high, necessitating further lightweighting for execution on edge devices.

- Source: Bridging Large-Model Reasoning and Real-Time Control via Agentic Fast-Slow Planning

This paper can be compared to the functioning of the human brain. We think deeply when solving math problems (slow) but move instinctively to dodge a thrown ball (fast). For robots, this “switching” is key to autonomy. This approach promises the emergence of robots capable of more human-like flexible movements.

Paper 3: When AI Improves Answers but Slows Knowledge Creation (Economics & Behavioral Economics)

- Author & Affiliation: Keh-Kuan Sun (Economics researcher)

- Background and Research Question: While AI adoption enhances individual productivity, what is its impact on long-term “creation of new knowledge”? This study analyzes AI’s role in the formation process of digital public goods (collective intelligence).

- Proposed Method: A mathematical model using matching theory is constructed to simulate the phenomenon where AI, while improving the efficiency of searching and integrating existing information, also substitutes for human exploratory thinking.

- Key Results: Increased answer accuracy through AI is beneficial in the short term, but by not burdening humans, it reduces opportunities for deep thinking, potentially slowing the growth rate of the public knowledge base.

- Significance and Limitations: This serves as a warning about the “negative side effects” of AI convenience on creativity. As it is based on a specific model, generalization to diverse real-world work environments requires caution.

- Source: When AI Improves Answers but Slows Knowledge Creation: Matching and Dynamic Knowledge Creation in Digital Public Goods

This is an interesting study empirically demonstrating the concern “relying too much on AI makes humans less intelligent” from an economic perspective. The phenomenon where people are satisfied with reading AI-summarized knowledge, thus shortcutting the original learning process, could be termed “knowledge hollowization.” It is essential to maintain the habit of posing one’s own questions while using AI as a tool for future learning.

Paper 4: Solving Problems of Unknown Difficulty (Economics)

- Author & Affiliation: Nicholas Wu (Theoretical Economics)

- Background and Research Question: This paper addresses how decision-makers should arrive at optimal solutions in economics and business when the difficulty of a problem to be solved is “unknown.”

- Proposed Method: A game-theoretic model focusing on the exploration-exploitation trade-off is constructed, proving the importance of early feedback when problem difficulty is uncertain.

- Key Results: It demonstrates that in cases of unknown difficulty, investing in obtaining information from the first attempt dramatically increases the probability of ultimate success.

- Significance and Limitations: Provides insights for project management in highly uncertain environments. The model is a purely theoretical construct and does not account for human psychological biases.

- Source: Solving Problems of Unknown Difficulty

When tackling unprecedented tasks like new technology development or venture management, this paper teaches the value of the “first step.” The idea that designing tests (experiments) to understand “what is not understood,” rather than immediately seeking answers to unknowns, is a shortcut to success is highly insightful.

Paper 5: Towards protein folding pathways by reconstructing protein residue networks with a policy-driven model (Life Sciences & Drug Discovery AI)

- Author & Affiliation: Susan Khor (Quantitative Biology)

- Background and Research Question: Elucidating the process by which proteins fold into specific three-dimensional structures from their amino acid sequences (folding pathways) is key to developing new drugs. However, computationally reproducing this dynamic process has been difficult.

- Proposed Method: A policy-driven generative model is used to propose a method for stepwise reconstruction of the interaction network between protein residues (amino acid units).

- Key Results: Compared to traditional static structure prediction, this method successfully simulates the dynamic folding process with high accuracy.

- Significance and Limitations: This deepens our understanding of diseases like Alzheimer’s, caused by misfolded proteins. Computational load remains a challenge.

- Source: Towards protein folding pathways by reconstructing protein residue networks with a policy-driven model

Protein folding is like “folding complex origami instantly with unseen hands.” While previous AI was good at predicting the “finished origami,” this research attempts to observe “the process of folding.” If this is elucidated, the speed of finding new molecular targets to halt disease progression should increase dramatically.

Cross-Paper Observations

A common trend observed across the selected papers is AI’s rapid evolution from “static result generation” to “dynamic process control.” Behavioral shift detection (Paper 1) and fast-slow planning (Paper 2) in robotics demand real-time adaptability assuming environmental changes. Similarly, the simulation of folding pathways in life sciences (Paper 5) focuses on a dynamic understanding of “process.”

On the other hand, economic perspectives (Papers 3, 4) highlight the risk of “mental shortcuts” brought about by AI-driven efficiency. As AI technology becomes more autonomous and sophisticated, a greater emphasis on the human side’s “stance of understanding the dynamic processes behind AI-generated results” will be required. Technology is reaching a phase where it redefines human involvement not just in providing answers, but in the very process of solving questions.

References

| Title | Source | URL |

|---|---|---|

| Belief Dynamics for Detecting Behavioral Shifts | arXiv | https://arxiv.org/abs/2604.04967 |

| Bridging Large-Model Reasoning and Real-Time Control | arXiv | https://arxiv.org/abs/2604.01681 |

| When AI Improves Answers but Slows Knowledge Creation | arXiv | https://arxiv.org/abs/2604.00468 |

| Solving Problems of Unknown Difficulty | arXiv | https://arxiv.org/abs/2604.00156 |

| Towards protein folding pathways | arXiv | https://arxiv.org/abs/2604.04677 |

| VeloTree: Inferring single-cell trajectories | arXiv | https://arxiv.org/abs/2604.02380 |

| Oldest octopus fossil is no octopus at all | Royal Society | https://royalsocietypublishing.org/doi/10.1098/rspb.2026.0407 |

| New chip survives 1300F | ScienceDaily | https://www.sciencedaily.com/releases/2026/04/260407130545.htm |

This article was automatically generated by LLM. It may contain errors.